Will Foley

It seems that in this digital age, our boring dystopia as Mark Fisher once called it, we are surrounded and overwhelmed by all manner of technological devices and infrastructure, from our cellphones, whose never-ending screens of AI slop, war footage, and poorly cut clips of television shows lock us in trances as we rot in our beds, to man-made horrors beyond our comprehension in the form of autonomous attack drones and self-driving cars that kill people. It is easy to get so wrapped up in highly sensational things such as these that one may fail to ask: what even is “the cloud”? I suspect that most see it only as some distant, intangible, and ethereal force or locale; an infinite expanse which holds an interminable repository of data, made visible to us only through our phones and computers. In reality, this digital collective unconscious is anchored by some of the most massive, power-guzzling technological complexes in human history: hyperscale data centres. If the global internet functions as a sprawling central nervous system, these facilities are the cerebral lobes: monolithic organs designed to interpret, store, and process the unfathomable volumes of data that define modern life. As of February 2026, there are around 288 data centres planned or operating in Canada, mainly concentrated in and around the country’s major urban areas.

Data Centre Alley/Data Center Map

As for the United States, it boasts the highest number of data centres in the world at 4,029, placed, as in Canada, in and around some of its biggest cities. Unlike in Canada, though, the largest concentration of its facilities (570 in total) is found on the East Coast, specifically in an area of Virginia colloquially known as “Data Center Alley”, in Loudoun County, about an hour outside of the capital, Washington, D.C. For decades, these facilities have been evolving and growing in number without attracting much attention from the media or the public at large. But the advent of advanced LLMs (large language models) and the massive scale of the facilities required to power them, coupled with the steadily worsening image of artificial intelligence among the public, has brought this phenomenon into the light of day. Naturally, with the increased scrutiny placed on artificial intelligence and data centres, there has been much discussion online regarding the cost of such ventures, both economic and, especially, ecological, as it is becoming clear that the hunger of these facilities requires a level of sustained electrical baseload and hydrological cooling that our planet’s existing resources and infrastructure are simply ill-equipped to handle. Increased residential energy rates, the depletion and/or tainting of freshwater, and both noise and air pollution have been a grim reality for the communities near these facilities. Unfortunately, all of these things may soon become a problem for the people of the Saint John area with the announcement of Beacon AI and the Texas-based Voltagrid’s Spruce Lake Data Centre Hub, due to be operational by 2028, as if we don’t have enough issues with pollution and high energy costs already.

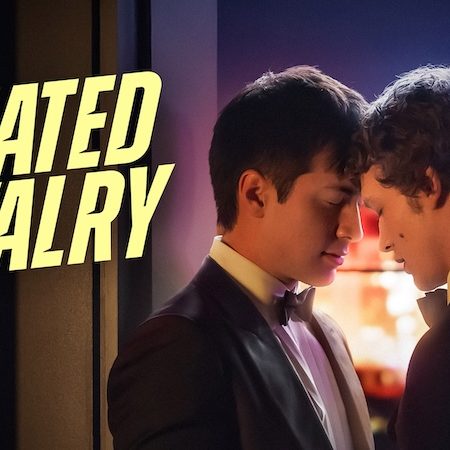

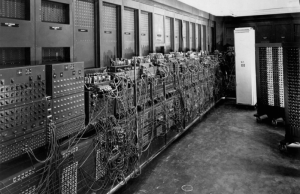

To understand the scale of this threat, one must first understand the origins of the data centre. According to IBM, the first data centre in the world was built to house the first computer, developed for the U.S. military at UPenn during the Second World War. It was called ENIAC, or the Electronic Numerical Integrator and Computer; a primitive machine of colossal physical size which bears little resemblance to the PCs of the modern day. Throughout the subsequent decades, the barrier to entry into the world of computing fell lower and lower as computers were scaled down to more manageable sizes and prices. By the 1990s they had become commonplace in the workplace and the home. Prior to the 1990s, computing was typically restricted to localized and decentralized networks, accessed through servers hosted by arrays of computers working in tandem to provide network access to authorized users. This much smaller version of the data centre remains to this day, mainly in corporate offices. Then ISPs (Internet service providers) came onto the scene to provide access to the internet for all who could pay for it. Thus, the disparate digital fiefdoms beyond our physical world were brought together to form a far more familiar, centralized internet. This transition, later bolstered by the popularization of cloud computing around the mid to late 2000s, led to the development of highly sophisticated facilities to power cloud-based digital infrastructure for the big players in the tech scene. In essence, this was the genesis of the hyperscale data centre: the vast and rigorously maintained temples wherein thousands of servers hum in unison as they process and store collections of data measureless to man, and commune with other such machines in distant lands, upon submarine threads of gossamer.

The ENIAC at UPenn/Sims Lifecycle Services

Naturally, given the incredible power of these hyperscale data centres, businesses focused on AI development have taken a keen interest. So much so that the proliferation of data centres worldwide is driven primarily by investments in specialized campuses with sufficient computing power to both train AI models and serve the needs of their users. These facilities require an incredible amount of expensive equipment, such as thousands of specialized GPU (graphics processing unit) chips designed to process incoming data at a rapid rate for the sustained periods required for around-the-clock service. Because these chips are so powerful, they tend to generate a great amount of heat as they run. This necessitates the use of elaborate, perpetually operational cooling systems, which, when coupled with the power needed just to run the systems that house the chips and the buildings that house all of the equipment, results in facilities that simply devour energy.

1990s Server Room/opendirectories, tumblr

According to Statista, the average power capacity of a hyperscale data centre rests somewhere around 100 MW, which is equivalent to the power consumption of a large town. There are three planned facilities by Beacon AI and Voltagrid in Alberta that are each due to have a 400 MW capacity, which is equivalent to the power consumption of an entire city. Much of this energy, at least in the U.S., tends to be derived from power plants of the heinous fossil fuel variety (around 56%). Funnily enough, the aforementioned Voltagrid, helmed by the Canadian expatriate Nathan Ough, is set to utilize natural gas power systems in its facilities overseas, manufactured by the Halliburton Company, a firm once led by one of the preeminent profiteers of the Iraq War, Dick Cheney. Despite some U.S. data centres boasting on-site power storage and/or generation in one form or another, the vast majority heartily sup like leeches from the withered flesh of overtaxed local power grids. This has been a huge issue in some areas, especially “Data Center Alley” in Virginia, which nearly faced a total blackout when 60 data centres simultaneously disconnected from the grid in 2024. Apart from blackouts, the denizens of Northern Virginia and Baltimore have also seen substantial increases in their monthly power bills: for many, the cost has actually doubled.

Coal Power Plant/Pixabay

As an anodyne for some of the strain placed upon American power grids by these data centres, the Trump administration has made moves to revitalize coal and natural gas plants, following Biden’s attempts to curb emissions by phasing out these relics of a filthy past through amendments to the MATS (Mercury and Air Toxics Standards) rule. However, on the “FACT SHEET” webpage for Trump’s initiative, there is only one mention of data centres, in regard to the demands for compensation for a “new generation built on their behalf”. One would think that there would be more ire directed their way, but it seems not. It is highly likely that this move is a short-sighted, short-term solution to maintain American supremacy in the international AI arms race under the guise of providing “affordable, dependable energy for American families”. I don’t know, sounds like more pandering Reaganesque gobbledygook to me.

Spruce Lake Data Centre concept art/Beacon AI

While the hyperscale data centre’s boundless hunger for energy is incredibly concerning, there is also the matter of water, which is commonly used to cool the continuously operating technological infrastructure of these facilities. One of the more common methods of cooling comes in the form of cooling towers, which utilize evaporation to process the hot air and cool the machines. This tends to be very wasteful, as approximately 80% of the water used by data centres for this application simply turns to vapour and disappears into the sky. This is made even worse by the fact that potable freshwater is needed for cooling applications, as it typically will not leave behind anything that will harm the sensitive components within the facilities (unlike seawater, whose high salinity raises corrosion concerns). Unfortunately, as one may know if they have ever examined a map of the world, around 97% of the world’s water is found in the oceans and seas, while only 3% is freshwater, and 0.5% of this freshwater is potable. Therefore, some companies end up having to tap into subterranean aquifers or municipal drinking water, both of which are quite bad, though for different reasons. There are other options for cooling, such as the closed-loop method of liquid cooling, which continuously circulates water or synthetic coolant through plumbing around the warm components. This method is becoming increasingly common in high-end gaming PCs, albeit at a much smaller scale; it comes with the benefit of far less continuous use of fresh potable water, and such systems tend to require less maintenance.

The proposed Voltagrid and Beacon AI 380 MW hyperscale data centre project in the controversial Spruce Lake Industrial Park brings these global anxieties to our doorstep at a very bad time. Those who live in the surrounding area have already expressed great fury at the proposed, and eventually approved, expansion, which they worry will harm the local wetland and ancient woodland. And despite the promises of transparency and 1,300 new jobs (though only 210 are due to remain after construction) given at an open house event in Lorneville in November 2025, certain individuals such as Adam Wilkins still felt uncomfortable due to the lack of answers regarding exactly how much water would be used, especially since Saint John had just experienced its driest summer on record in 2025. I’d wager that they are right to be worried about the impact the facility may have on the water supply, given the amount of freshwater typically required for such ventures. This is especially true if the developers go with an evaporation-based cooling system, though Ough did claim that the centre would consume “nearly zero water”. Unfortunately, the community cannot be certain, as Ough seems to speak in a somewhat equivocal manner on the matter of water, a common trope for the guileful and cajoling businessman or industrialist. Additionally, Ough and Co. plan to construct an allegedly low-emission on-site fossil fuel generator which will supply half of the facility’s power, while the other 190 MW is due to be supplied by NB Power. Unfortunately, as in Baltimore and Loudoun County, adding such a high-capacity facility will likely exacerbate the already worrying trend of steep rate hikes by NB Power over the past three years, with rates due to increase once again in April 2026 by 4.75%. It would be a great shame if this potential cost were passed on to the consumer, as we truly do not need yet another big corporation receiving special treatment over the common man; it would be like letting another fox into the henhouse so he can raise hell as he pleases.
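For a rough sense of what 190 MW from the grid could mean over a year, here is a minimal back-of-the-envelope sketch in Python. The 380 MW capacity and the half-and-half split come from the figures above; everything else is my own assumption for illustration, in particular the `load_factor` parameter and the idea that the facility draws its full rated capacity around the clock, which real facilities may not do. The function names are hypothetical, not anything published by Voltagrid, Beacon AI, or NB Power.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours (ignores leap years)


def grid_share_mw(total_mw: float, onsite_fraction: float) -> float:
    """Capacity (MW) left for the grid after on-site generation takes its share."""
    if not 0.0 <= onsite_fraction <= 1.0:
        raise ValueError("onsite_fraction must be between 0 and 1")
    return total_mw * (1.0 - onsite_fraction)


def annual_energy_gwh(capacity_mw: float, load_factor: float = 1.0) -> float:
    """Energy drawn over one year, in GWh.

    load_factor is an ASSUMED average utilisation (1.0 = full capacity,
    all year); it is not a figure from the project's developers.
    """
    if not 0.0 <= load_factor <= 1.0:
        raise ValueError("load_factor must be between 0 and 1")
    return capacity_mw * load_factor * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    total_mw = 380.0       # proposed facility capacity, from the article
    onsite_fraction = 0.5  # half supplied by the on-site generator, from the article

    grid_mw = grid_share_mw(total_mw, onsite_fraction)
    print(f"Grid-supplied capacity: {grid_mw:.0f} MW")
    print(f"Grid energy per year at full load: {annual_energy_gwh(grid_mw):.1f} GWh")
```

Under the full-load assumption, 190 MW works out to roughly 1,664 GWh drawn from the grid per year; lowering `load_factor` scales that number down proportionally, so treat it as an upper bound rather than a forecast.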

Now we see… now we see that the “cloud” is revealed to be no ethereal cluster of intangible data, but a leviathan; a vast intelligence wrought from steel, concrete, copper, and silicon, whose boundless appetite demands the sacrifice of our natural resources, while we languish in digital trances, eyes aflame with LCD (liquid crystal display) dreams. In our desperate, Promethean pursuit of the synthetic intellect of the advanced LLM, we have birthed inert eldritch abominations that heartily drain our energy and sup from our springs, then belch great clouds of vapour into the unfeeling sky, as we lie shrivelled upon the blasted heath, unable to set our bones in motion, let alone our minds.